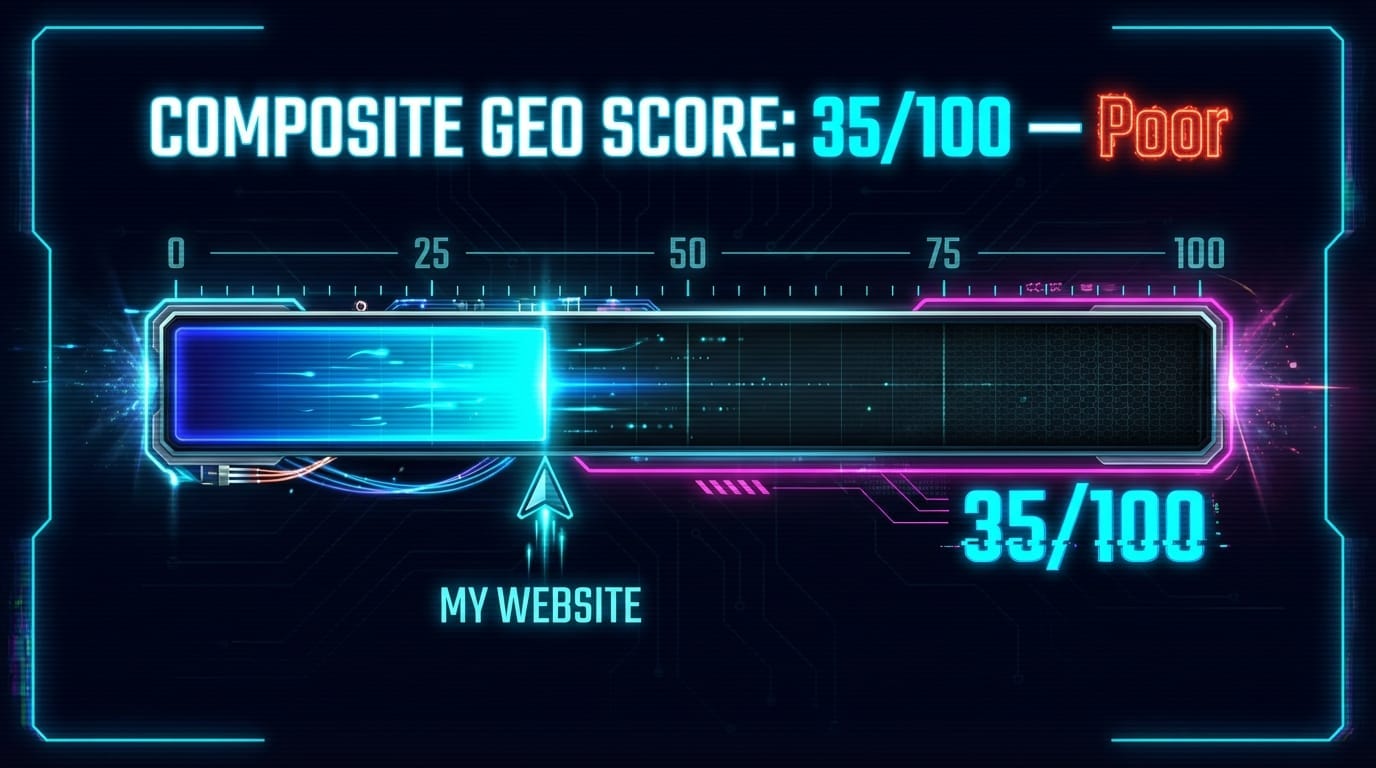

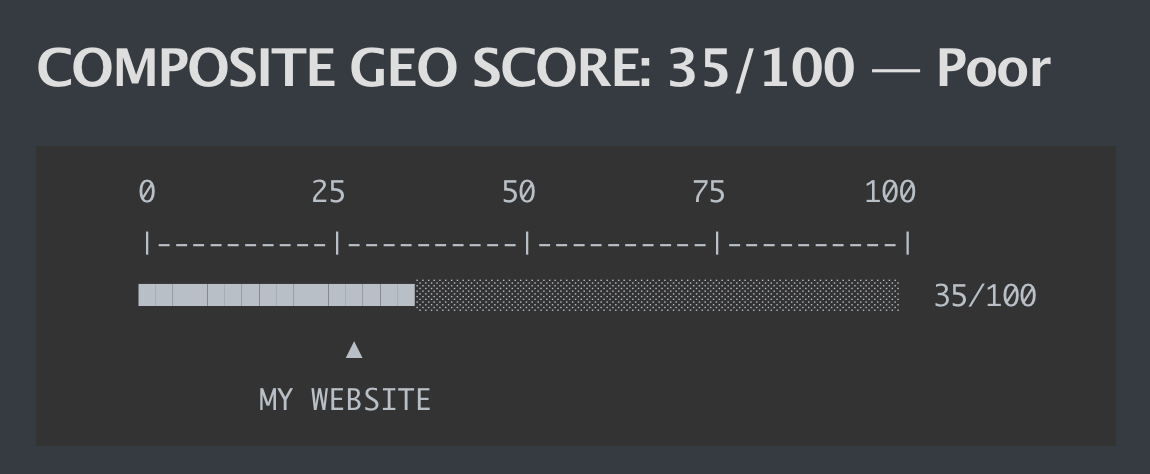

I Ran a GEO (Generative Engine Optimization) Audit on My Website. Got 35 Out of 100

That number hurts less than the reason for it.

A couple of weeks ago I stumbled on an open-source project called geo-seo-claude. GEO stands for Generative Engine Optimization — the practice of making your website visible to AI-powered search engines rather than just Google’s traditional crawler. The tool is a set of Claude Code skills that runs a full AI-visibility audit on any URL. Free, open-source, no API costs beyond Claude Code itself.

Install it for yourself: https://github.com/zubair-trabzada/geo-seo-claude

I ran it on my website.

35/100. Poor.

Here’s the thing, though. The score itself wasn’t the most interesting part. What got me thinking was how the tool works under the hood.

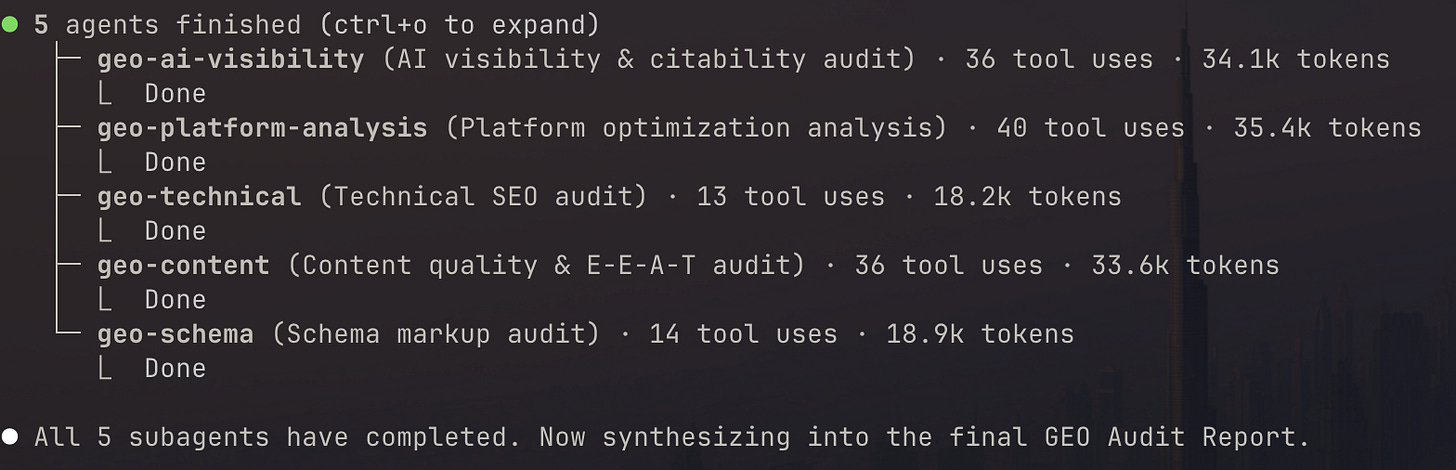

Five agents, one audit

When you run /geo audit <url>, the tool doesn’t work sequentially. It spawns five parallel subagents, each with a different specialty:

AI Visibility agent — checks citability scores, AI crawler access, brand mentions across platforms

Platform Analysis agent — tests readiness for ChatGPT, Perplexity, and Google AI Overviews separately, because each platform weighs signals differently

Technical SEO agent — crawlability, security headers, Core Web Vitals risk, server-side rendering

Content Quality agent — E-E-A-T signals, content freshness, expertise attribution

Schema Markup agent — structured data detection, validation, and gap analysis

They run simultaneously. A synthesis layer then aggregates the scores into a composite GEO Score with weighted categories. The whole thing takes a few minutes.
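The fan-out/fan-in pattern behind this can be sketched with Python's asyncio. This is not the tool's code: its agents are Claude Code subagents, not Python coroutines. The specialist names and the `run_specialist` stub are hypothetical and only simulate work.

```python
import asyncio
import time

# Hypothetical names mirroring the five specialist agents listed above.
SPECIALISTS = [
    "ai_visibility",
    "platform_analysis",
    "technical_seo",
    "content_quality",
    "schema_markup",
]

async def run_specialist(name: str, url: str) -> tuple[str, dict]:
    """Stand-in for one subagent; a real one would fetch and analyse the site."""
    await asyncio.sleep(0.1)  # simulated work
    return name, {"url": url, "findings": []}

async def audit(url: str) -> dict[str, dict]:
    # Fan out: every specialist starts immediately and they run concurrently.
    results = await asyncio.gather(*(run_specialist(n, url) for n in SPECIALISTS))
    # Fan in: collect all results for a single synthesis step.
    return dict(results)

if __name__ == "__main__":
    start = time.perf_counter()
    report = asyncio.run(audit("https://example.com"))
    # Five 0.1 s tasks finish in roughly 0.1 s total, not 0.5 s.
    print(sorted(report), f"{time.perf_counter() - start:.2f}s")
```

Because the specialists don't depend on each other's output, the wall-clock time is set by the slowest one rather than the sum of all five.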

The architecture is interesting because it mirrors how a consulting team would approach an audit: not one generalist doing everything sequentially, but specialists working in parallel. Except this costs nothing beyond your Claude Code usage and doesn’t require a kickoff call.

What the tool found on my website is a useful illustration of why this matters.

The robots.txt lies

My website’s robots.txt file was politely inviting every AI crawler in. GPTBot — welcome. ClaudeBot — come right in. PerplexityBot — door’s open.

The WAF (web application firewall) was having none of it.

Every non-browser request got an HTTP 429 (Too Many Requests) response with a JavaScript challenge. Policy said one thing, infrastructure said another. The result: every major AI search engine treated the site as if it didn’t exist. The sitemap was blocked too, so AI crawlers couldn’t even discover what pages were there.

That’s the kind of mismatch that makes every other optimization pointless. Perfect schema markup, clean E-E-A-T signals, a beautifully crafted llms.txt file — none of it matters if the crawler can’t get through the door.

Fix the firewall first. Everything else is noise.
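You can check for this policy-versus-infrastructure mismatch yourself. The sketch below is a minimal version, assuming a few simplifications. It parses robots.txt with the standard library's `urllib.robotparser`, requests the page with each crawler's name as the User-Agent, and flags any agent that robots.txt allows but the server refuses. The function names, the bare `GPTBot`-style User-Agent tokens (real crawlers send longer strings), treating only 2xx as "reachable", and the `/sitemap.xml` path are all assumptions for illustration.

```python
import urllib.error
import urllib.request
from typing import Callable
from urllib.robotparser import RobotFileParser

def fetch_status(url: str, user_agent: str) -> int:
    """Return the HTTP status a request with this User-Agent receives."""
    req = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(req, timeout=10) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # 4xx/5xx responses arrive as HTTPError

def policy_vs_reality(robots_txt: str, url: str, agents: list[str],
                      fetch: Callable[[str, str], int] = fetch_status) -> dict[str, str]:
    """Compare what robots.txt allows with what the server actually returns."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    report = {}
    for agent in agents:
        allowed = parser.can_fetch(agent, url)
        status = fetch(url, agent)
        reachable = 200 <= status < 300
        if allowed and not reachable:
            report[agent] = f"MISMATCH: robots.txt allows, server returned {status}"
        elif allowed:
            report[agent] = "ok"
        else:
            report[agent] = "disallowed by robots.txt"
    return report

# A robots.txt that welcomes the three AI crawlers, as in the story above.
ROBOTS_TXT = (
    "User-agent: GPTBot\nAllow: /\n\n"
    "User-agent: ClaudeBot\nAllow: /\n\n"
    "User-agent: PerplexityBot\nAllow: /\n"
)

def waf_challenge(url: str, agent: str) -> int:
    """Hypothetical offline stand-in for a WAF that challenges every bot."""
    return 429

print(policy_vs_reality(ROBOTS_TXT, "https://example.com/sitemap.xml",
                        ["GPTBot", "ClaudeBot", "PerplexityBot"], fetch=waf_challenge))
```

Against a live site, drop the `fetch=` argument so the default `fetch_status` makes real requests. Injecting the fetcher keeps the comparison logic testable without a network.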

The score breakdown

AI Citability & Visibility: 28/100

Brand Authority Signals: 35/100

Content Quality & E-E-A-T: 42/100

Technical Foundations: 34/100

Structured Data: 38/100

Platform Optimization: 34/100
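The post doesn’t say how the tool weights these categories. As a quick sanity check, assume equal weights. A plain average of the six scores then lands at about 35, close to the headline number. The `composite` helper and the weights below are illustrative only, not the tool’s formula.

```python
# Category scores from the breakdown above.
scores = {
    "AI Citability & Visibility": 28,
    "Brand Authority Signals": 35,
    "Content Quality & E-E-A-T": 42,
    "Technical Foundations": 34,
    "Structured Data": 38,
    "Platform Optimization": 34,
}

def composite(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted mean of category scores (weights are normalised, so any scale works)."""
    total = sum(weights[name] for name in scores)
    return sum(scores[name] * weights[name] for name in scores) / total

# Equal weights: an assumption for illustration, not the tool's real weighting.
equal = {name: 1.0 for name in scores}
print(round(composite(scores, equal), 1))  # 35.2
```

Doubling the weight on AI Citability & Visibility pulls the composite down to about 34.1. Any weighting that favours the weakest category drags the total below the simple average.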

Content scoring highest is both reassuring and slightly annoying. It means the substance is there — the actual expertise and information exists on the site. But the infrastructure and signals are failing it. Like having good products in a store with a locked door and no signage.

Why GEO is actually different from SEO

Traditional SEO optimizes for search engines that crawl and index pages, then return ranked links. You rank, users click, users arrive.

GEO optimizes for AI systems that consume your content and synthesize answers. If an AI doesn’t crawl you, doesn’t cite you, doesn’t have you in its knowledge base — you don’t exist in its answers. No ranking. No link. Just absence.

The numbers behind this shift are not subtle. AI-referred traffic grew 527% year-over-year. Gartner projects a 50% drop in traditional search traffic by 2028. Brand mentions correlate 3x more strongly with AI visibility than backlinks. And only 23% of marketers are actively doing anything about it.

That last number is the window. Most websites are currently invisible to AI search. Mine was one of them.

What gets fixed first

The tool outputs a prioritized action plan, not just a score. The top item was obvious once identified: whitelist AI crawlers in the WAF configuration. Low effort, critical impact. Everything else waits until that’s done.
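In practice, "whitelist AI crawlers" means exempting them from the challenge that was returning 429s. Real WAFs express this in their own rule syntax. The Python sketch below only models the decision. It also checks the source IP, because anyone can put `GPTBot` in a User-Agent header. Some crawler operators publish IP ranges for this purpose; the CIDR blocks here are reserved documentation addresses (RFC 5737) standing in for those ranges, and `should_challenge` is a hypothetical name.

```python
import ipaddress

# Placeholder ranges (RFC 5737 documentation blocks), NOT any vendor's real IPs.
# Replace with the ranges each crawler operator publishes.
AI_CRAWLERS = {
    "GPTBot": [ipaddress.ip_network("192.0.2.0/24")],
    "ClaudeBot": [ipaddress.ip_network("198.51.100.0/24")],
    "PerplexityBot": [ipaddress.ip_network("203.0.113.0/24")],
}

def should_challenge(user_agent: str, client_ip: str) -> bool:
    """Return False (skip the JS challenge) only for verified AI crawlers."""
    ip = ipaddress.ip_address(client_ip)
    for token, networks in AI_CRAWLERS.items():
        if token.lower() in user_agent.lower():
            # The UA claims to be a known crawler: trust it only if the
            # source IP falls inside that crawler's listed ranges.
            return not any(ip in net for net in networks)
    return True  # everyone else keeps the existing challenge

print(should_challenge("Mozilla/5.0 (compatible; GPTBot/1.0)", "192.0.2.10"))  # False
print(should_challenge("GPTBot/1.0", "203.0.113.5"))  # True: right name, wrong network
```

Keeping the challenge as the default means the change only opens the door for the crawlers you list, rather than loosening bot protection across the board.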

After that: LocalBusiness schema for every location, comprehensive sameAs entity linking across platforms, author attribution on all content pieces.
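All three items end up as schema.org structured data embedded in the page as JSON-LD. The sketch below builds a `LocalBusiness` object for one location, with `sameAs` links to the same entity elsewhere, and an `Article` with an explicit `Person` as `author`. The helper names and every business detail and URL are hypothetical placeholders; the `@type` names and property names come from schema.org.

```python
import json

def local_business_jsonld(name: str, url: str, address: dict, same_as: list[str]) -> dict:
    """schema.org LocalBusiness for one location, linked to its other profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "address": {"@type": "PostalAddress", **address},
        # sameAs points at the same entity on other platforms.
        "sameAs": same_as,
    }

def article_jsonld(headline: str, author_name: str, author_url: str) -> dict:
    """schema.org Article with an explicit Person as author."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "url": author_url},
    }

def as_script_tag(obj: dict) -> str:
    """Wrap JSON-LD in the script tag that goes into the page's HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(obj, indent=2) + "\n</script>"

# Every value below is a placeholder.
data = local_business_jsonld(
    name="Example Co. Downtown",
    url="https://example.com/locations/downtown",
    address={
        "streetAddress": "123 Main St",
        "addressLocality": "Springfield",
        "postalCode": "00000",
        "addressCountry": "US",
    },
    same_as=[
        "https://www.linkedin.com/company/example",
        "https://www.facebook.com/example",
    ],
)
article = article_jsonld("How we audited our site", "Jane Doe", "https://example.com/team/jane-doe")
snippet = as_script_tag(data)
print(snippet)
```

Generating one object per location from a single helper keeps the `sameAs` list identical everywhere, which is the point of entity linking.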

Estimated score after Phase 1 fixes: 50-55/100. Not excellent. But visible — which is the baseline requirement for everything else to work.

The honest takeaway

If you’re a marketer and you haven’t thought about AI visibility yet, you’re optimizing for a distribution channel that’s quietly ceding ground. The users are moving. The traffic patterns are changing. The tools to audit this are now free.

Run the audit. The score might sting. The clarity is worth it.